
I am currently a Ph.D. student (2025-2028) majoring in Electronic and Information Engineering at the University of Science and Technology of China (USTC), supervised by Prof. Zheng-Jun Zha and Associate Prof. Jiawei Liu. Before that, I pursued my Master's degree at USTC (2023-2025) and received my Bachelor's degree in Automation from Northeastern University (2019-2023). My research interests include Multimodal Large Models, Multimodal Agents, and Agentic Reinforcement Learning (Agentic RL).

Email: yongchaoxu@mail.ustc.edu.cn


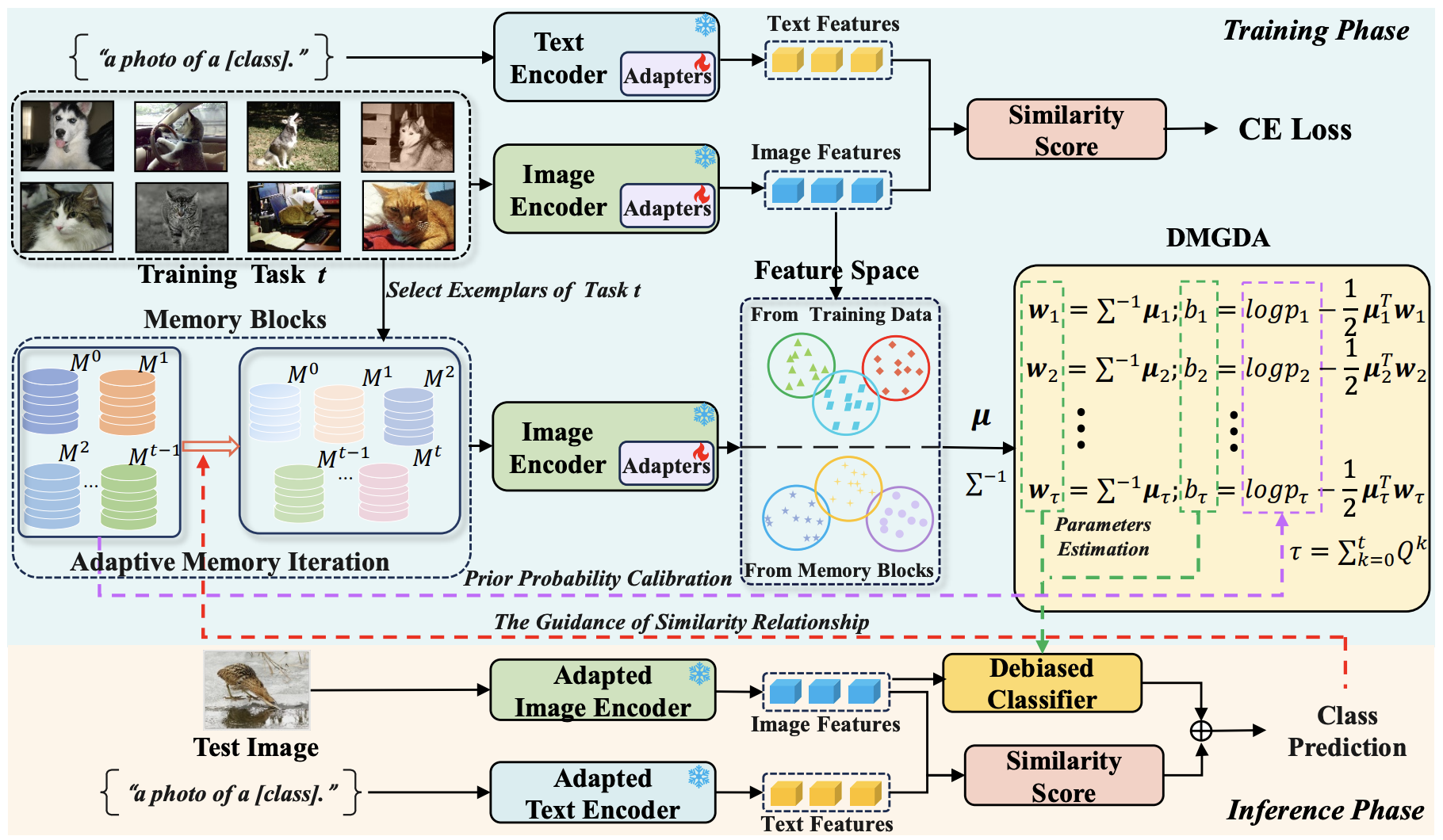

Sen Tao, Jiawei Liu, Yongchao Xu, Guangxi Wan, Peng Zeng

Chinese Conference on Computer Vision and Machine Intelligence (CVM2026), 2026

PDF / Code

We propose a debiased memory-calibrated Gaussian discriminant analysis method for VLM-based class incremental learning, which mitigates dynamic class imbalance in rehearsal and improves long-term knowledge retention with an adaptive memory iteration strategy.


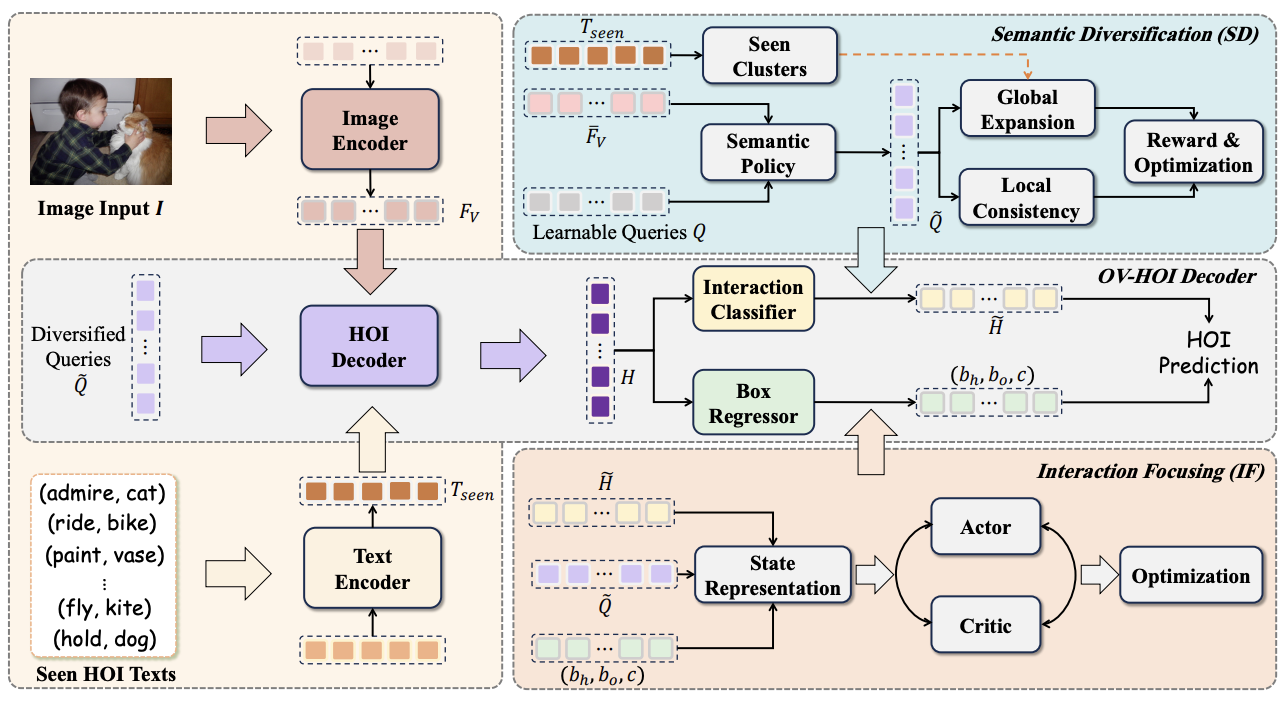

Yongchao Xu, Jiawei Liu, Junfeng Wang, Sen Tao, Na Jiang, Zheng-Jun Zha

Proceedings of the Computer Vision and Pattern Recognition Conference (CVPR2026, Highlight), 2026

PDF / Supp / Code

We propose a semantic-diversified and interaction-focused framework for open-vocabulary HOI detection, which enhances generalization to unseen interactions while capturing more discriminative and spatially interpretable interaction cues.


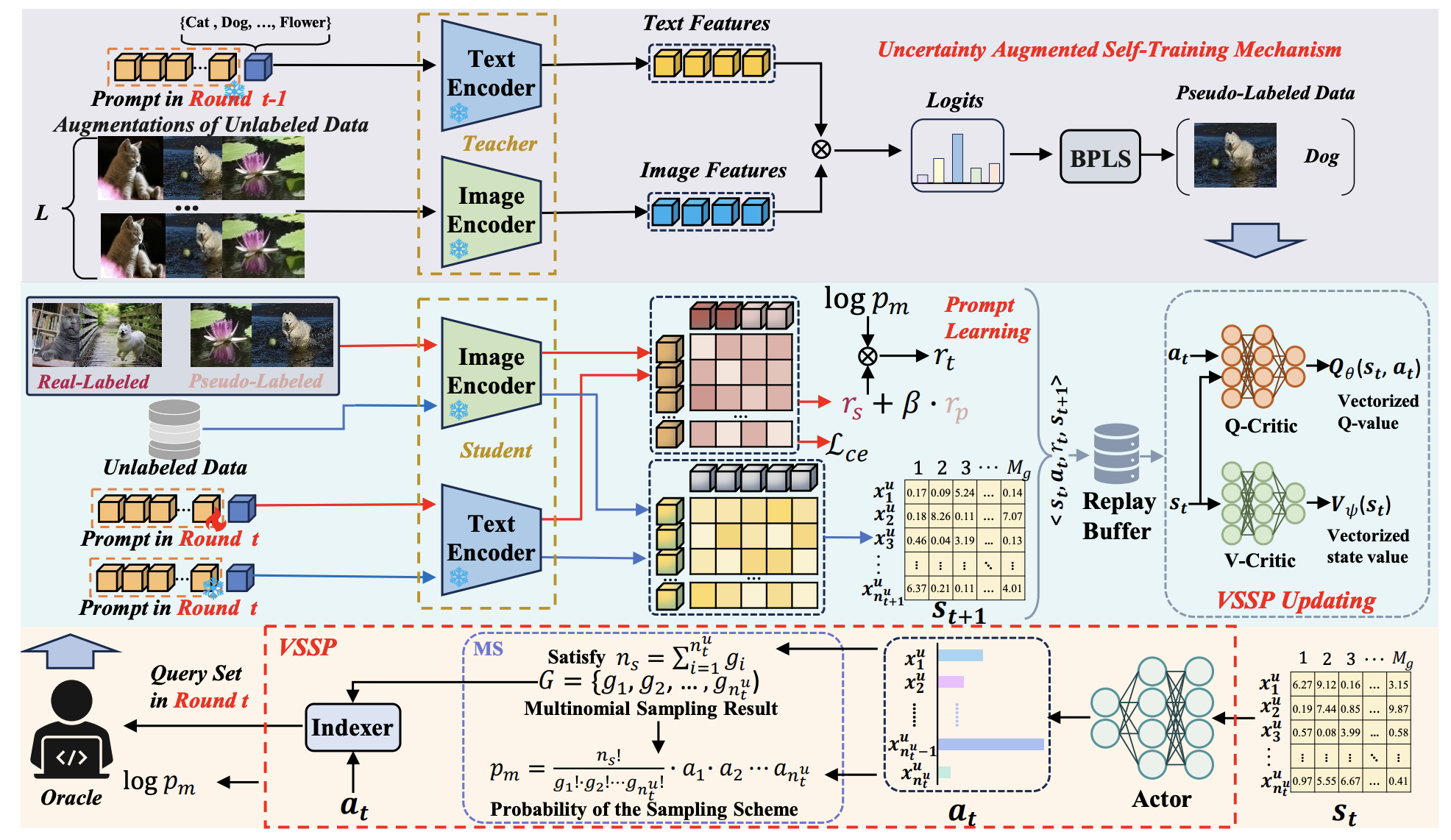

Sen Tao, Kaiduo Feng, Jiawei Liu, Peng Zeng, Yongchao Xu, Yufei Zheng, Zheng-Jun Zha

International Conference on Learning Representations (ICLR2026), 2026

PDF / Code

We propose a prompt-guided self-training sampling policy for active prompt learning, which improves sample selection by integrating soft actor-critic optimization with real-pseudo hybrid rewards and vectorized critics.


Jiawei Liu, Yongchao Xu (co-first author), Sen Tao, Yuexuan Qi, Zheng-Jun Zha

International Journal of Computer Vision (IJCV2026), 2026

PDF / Code

We propose a comprehensive context learning framework for zero-shot HOI detection, which improves recognition by collaboratively modeling semantic and spatial interactions.


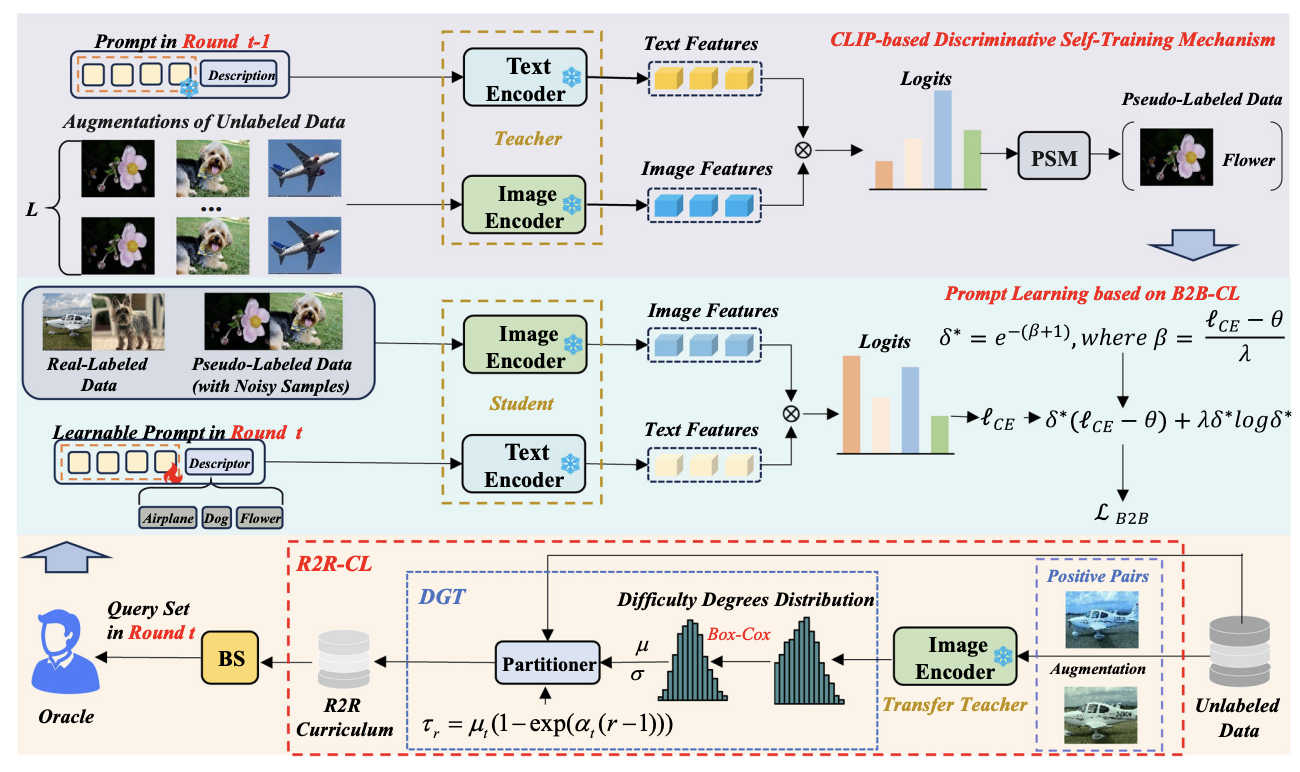

Sen Tao, Jiawei Liu, Peng Zeng, Yongchao Xu, Bingyu Hu, Zheng-Jun Zha

International Journal of Computer Vision (IJCV2026), 2026

PDF / Code

We propose a dual-curriculum active prompt learning framework that improves adaptation by progressively selecting informative samples and leveraging reliable pseudo-labeled data.


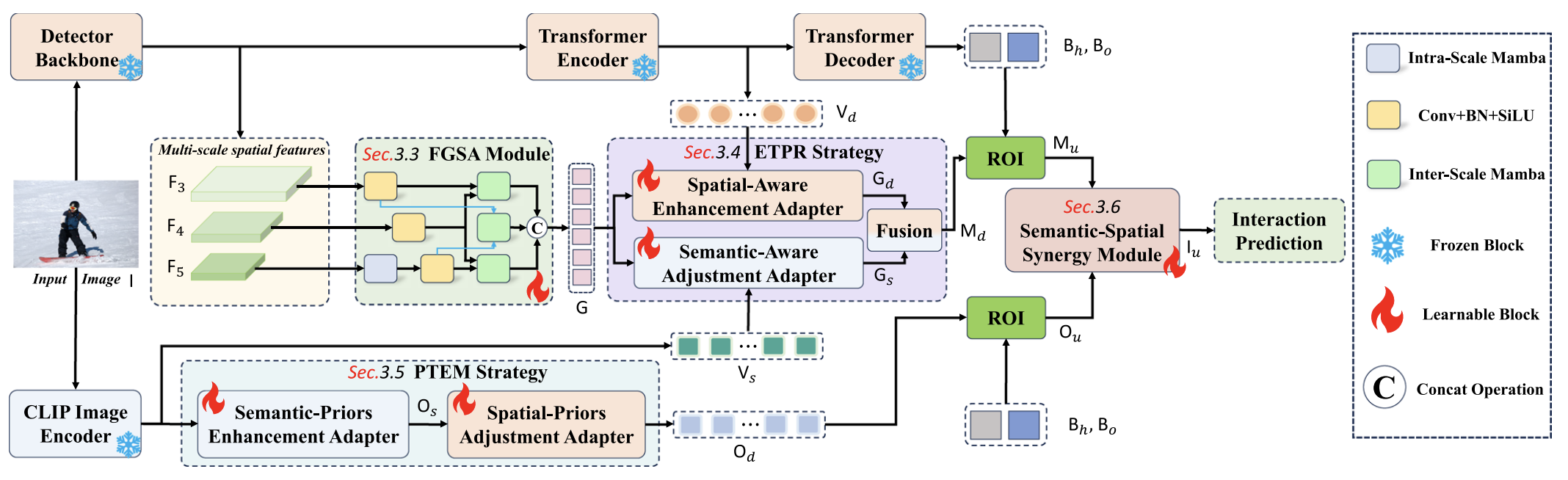

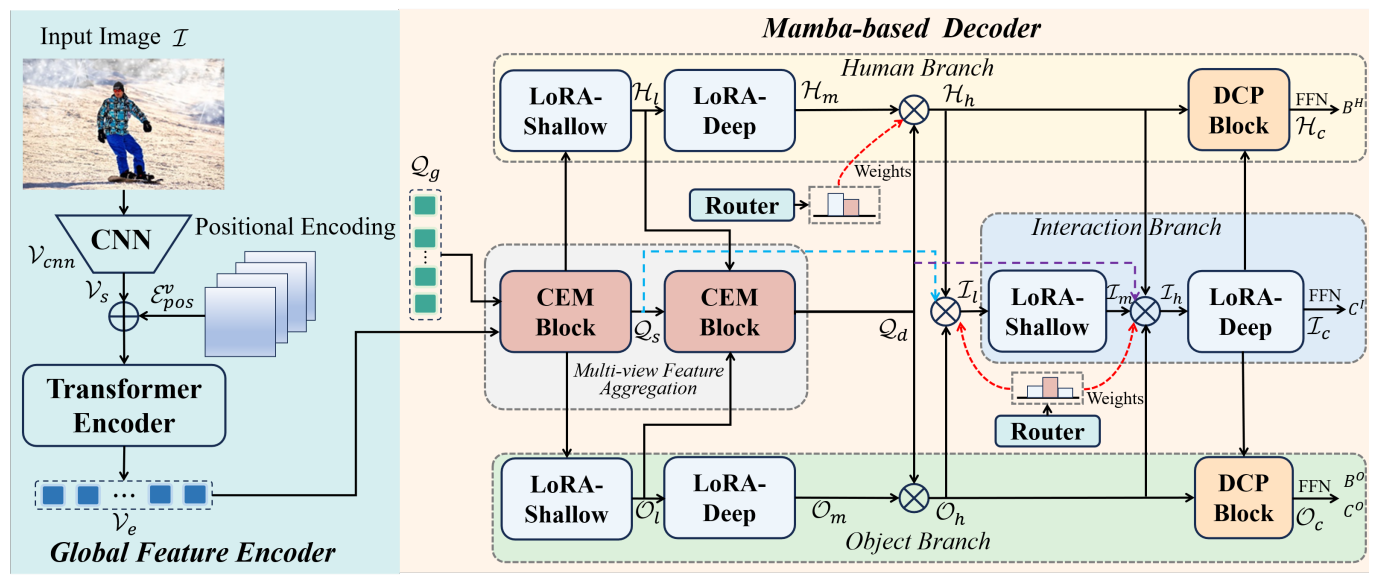

Yongchao Xu, Jiawei Liu, Sen Tao, Qiang Zhang, Zheng-Jun Zha

The Thirty-Ninth AAAI Conference on Artificial Intelligence (AAAI2025), 2025

PDF / Code

We introduce a novel HOI detection framework with a well-designed Mamba-based decoder that leverages the strengths of existing methods and improves the recognition of difficult HOI samples.


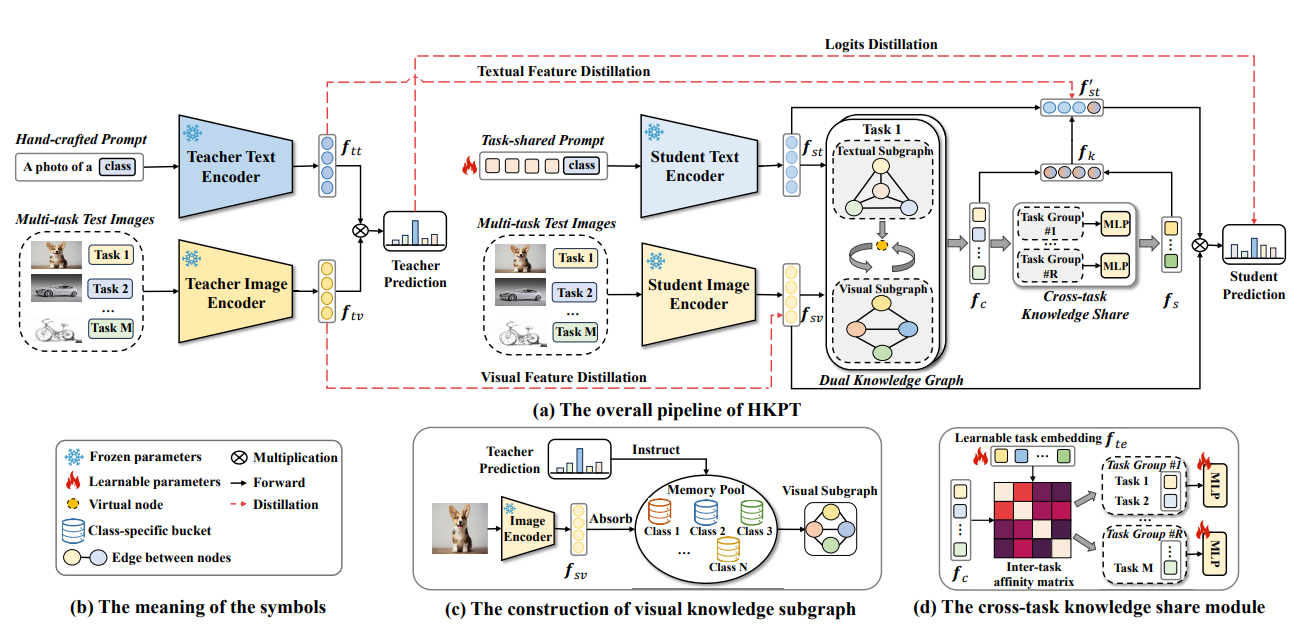

Qiang Zhang, Mengsheng Zhao, Jiawei Liu, Fanrui Zhang, Yongchao Xu, Zheng-Jun Zha

Proceedings of the Computer Vision and Pattern Recognition Conference (CVPR2025), 2025

PDF / Code

We propose the first method to address multi-task test-time adaptation for pre-trained VLMs. It also transfers seamlessly to standard single-task scenarios.


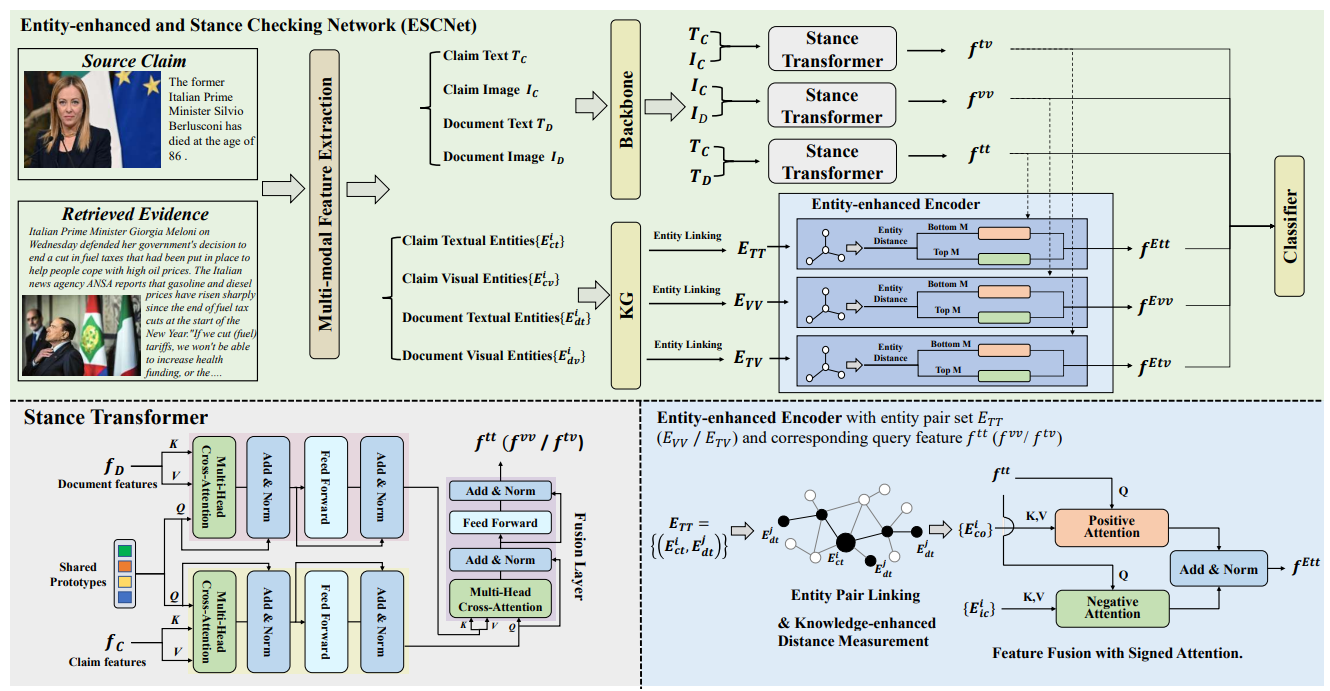

Fanrui Zhang, Jiawei Liu, Jingyi Xie, Qiang Zhang, Yongchao Xu, Zheng-Jun Zha

International World Wide Web Conference (WWW2024), 2024

PDF / Code

We establish the first large-scale, multi-domain Chinese multi-modal fact-checking dataset, encompassing all types of misinformation in the multi-modal fact-checking task.
